Push it!


Amber Y. Infrastructure Engineer

Sep 8, 2010

At Yelp, we push new code live every day. Pushing daily allows us to quickly prototype new features and squash bugs proactively. Because we aim to deploy new code so often, we’re always looking for ways to make the process efficient and painless.

There are four main stages to the Yelp push process: code review, integration, testing, and finally, live deployment. Each step is important, and there are ways to maximize the efficiency of all of them.

Code Review

This first stage of the push process happens before a push request is even made: all code destined for the live site is subject to a full review by at least one other developer. For more expansive changes, reviewers with relevant experience or responsibility are added to the review as well. Code review gives us a place to spot silly mistakes, suggest alternative methods of accomplishing certain goals, and ensure that we maintain uniform code standards. By involving engineers who maintain relevant portions of the code base, it also keeps them informed of what’s going on with areas of the code for which they are responsible. Furthermore, we’ve found that code review helps drive a sense of community as we discover and share tips, tricks, and lessons that we’ve encountered throughout the development process.
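Adding reviewers according to who owns the affected code can be sketched in a few lines of Python. This is a minimal, hypothetical illustration. The post doesn’t describe how Yelp chose reviewers, and the `OWNERS` map, the `pick_reviewers` helper, and every name in it are invented for the example.

```python
from fnmatch import fnmatch

# Hypothetical ownership map: glob pattern -> engineers responsible for that area.
OWNERS = {
    "search/*": ["alice"],
    "payments/*": ["bob", "carol"],
    "templates/*": ["dave"],
}


def pick_reviewers(author, changed_paths, default_reviewer, owners=OWNERS):
    """Return a sorted list of reviewers for a change.

    Owners of any touched area are added as reviewers. If no owner other than
    the author applies, fall back to a default reviewer so that every change
    still gets at least one other developer's eyes.
    """
    if default_reviewer == author:
        raise ValueError("the default reviewer must not be the author")
    reviewers = set()
    for path in changed_paths:
        for pattern, people in owners.items():
            if fnmatch(path, pattern):  # fnmatch's "*" also matches "/"
                reviewers.update(people)
    reviewers.discard(author)  # authors never review their own code
    if not reviewers:
        reviewers.add(default_reviewer)
    return sorted(reviewers)
```

For example, a change by `frank` touching only `README` falls back to the default reviewer, while one touching both `payments/` and `search/` pulls in all three owners.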

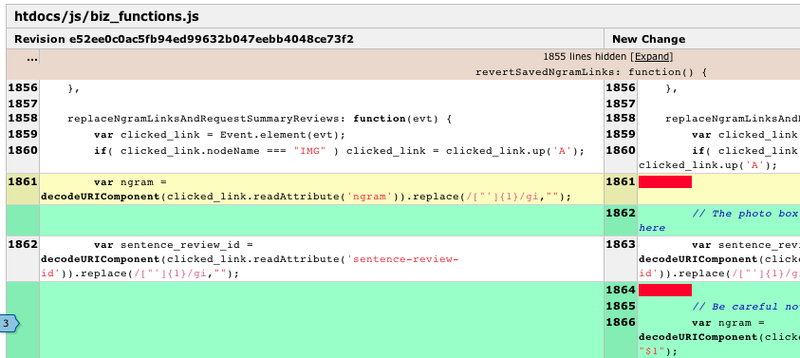

We use the excellent open-source Review Board to simplify the process. With features like the ability to add comments inline on diffs and compare different versions of diffs themselves, Review Board has proven itself a very helpful tool.

Integration

Yelp now uses Git for version control; we transitioned from Subversion a few months ago, but that’s a story for another blog post. Developers work in feature branches, pulling in changes from master at their own leisure. Before a branch is added to a push, however, we always rebase it onto the latest master to ensure that nothing conflicts with code that has already gone live. Each feature branch is also squashed down to a single atomic commit, which makes any necessary reverts easier.
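The rebase-and-squash step can be expressed as a short sequence of git commands. The sketch below assumes a remote named `origin` and a base branch named `master`. The post doesn’t say which exact commands Yelp ran, and `rebase_and_squash_commands` is a hypothetical helper that only builds the command lists rather than executing them.

```python
def rebase_and_squash_commands(branch, message, remote="origin", base="master"):
    """Return the git invocations that rebase `branch` onto the latest
    `remote/base` and squash it into a single commit.

    Nothing is executed here; each list can be passed to
    subprocess.run(cmd, check=True) in order.
    """
    upstream = f"{remote}/{base}"
    return [
        ["git", "fetch", remote],              # pick up the latest master
        ["git", "checkout", branch],
        ["git", "rebase", upstream],           # replay the branch on top of master
        ["git", "reset", "--soft", upstream],  # drop the branch commits, keep their changes staged
        ["git", "commit", "-m", message],      # one atomic commit for the whole feature
    ]
```

After the rebase, the branch tip is master plus the feature commits, so a soft reset to master leaves exactly the feature’s changes staged for one commit. Because this rewrites the branch’s history, republishing the branch afterwards requires a force push.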

When we were smaller, coordinating requests for pushing changes was handled via email. As our team grew, however, it became apparent that we needed a more central and consistent way of tracking the push process. We didn’t find any existing solutions that really fit our needs, so we developed an in-house Google App Engine app to track pending requests and the status of each stage of the current push. We’ve open-sourced it as PushmasterApp on GitHub. The app also provides reporting, which makes it easy to go back and review who pushed what, and when.
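To make the idea of tracking push stages concrete, here is a minimal sketch of a push request moving through a fixed set of states. The states, the transition table, and the `PushRequest` class are assumptions for illustration. PushmasterApp’s actual data model isn’t described in this post.

```python
from datetime import datetime, timezone
from enum import Enum


class State(Enum):
    REQUESTED = "requested"  # developer has asked for the change to go out
    ACCEPTED = "accepted"    # pushmaster has picked it for the current push
    STAGED = "staged"        # a build containing it is on the staging servers
    VERIFIED = "verified"    # developer has signed off on stage
    LIVE = "live"            # deployed to production
    PULLED = "pulled"        # removed from the push, to be re-requested later


# Hypothetical allowed transitions between states.
TRANSITIONS = {
    State.REQUESTED: {State.ACCEPTED},
    State.ACCEPTED: {State.STAGED, State.PULLED},
    State.STAGED: {State.VERIFIED, State.PULLED},
    State.VERIFIED: {State.LIVE, State.PULLED},
    State.LIVE: set(),
    State.PULLED: {State.REQUESTED},
}


class PushRequest:
    def __init__(self, author, branch):
        self.author = author
        self.branch = branch
        self.state = State.REQUESTED
        # (state, UTC timestamp) pairs: enough to report who pushed what, when.
        self.history = [(State.REQUESTED, datetime.now(timezone.utc))]

    def advance(self, new_state):
        """Move to `new_state`, rejecting transitions the table doesn't allow."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"cannot move from {self.state.value} to {new_state.value}")
        self.state = new_state
        self.history.append((new_state, datetime.now(timezone.utc)))
```

Encoding the allowed transitions in one table keeps the rules in a single place, so a request can’t, for instance, jump from "requested" straight to "live".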

Testing

Yelp doesn’t employ a dedicated QA team, so we rely heavily on a combination of automated testing and engineer-prepared test plans for new features. Because the engineers who write the tests are the same engineers who write the code, turnaround time for fixing bugs found during a push is significantly shorter than it would be if those bugs had to be handed back and forth between separate teams.

Automated Regression Testing

With hundreds of thousands of lines of code in the Yelp repository, testing for regressions by hand wouldn’t just be arduous; it would be impossible. While new features and bug fixes are verified by the engineers who wrote them, we also run a suite of over 10,000 test cases (built on Testify, the open-source testing framework we developed in-house) to check each build for potential issues. We also run a number of Selenium tests to exercise client-side functionality on the front end.

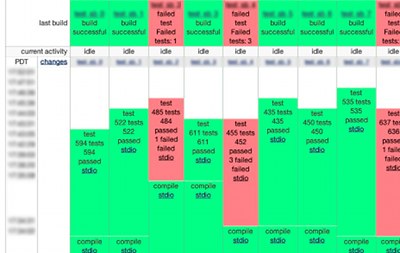

While engineers often run individual test cases or test suites in their own development environments, running the entire battery of tests would be taxing on any single machine. Instead, we distribute the process across a number of machines using BuildBot.
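One way to split a large suite across several machines is to balance the buckets by each test’s expected runtime. This is a hypothetical, standalone sketch using the greedy longest-processing-time heuristic. It is not BuildBot’s API or configuration, and the test names and timings in the example are made up.

```python
import heapq


def partition_tests(durations, n_workers):
    """Split tests into n_workers buckets with roughly equal total runtime.

    durations: dict mapping test name -> estimated seconds (e.g. from the last run).
    Greedy longest-processing-time heuristic: hand the slowest remaining test
    to whichever worker currently has the least total work.
    """
    if n_workers < 1:
        raise ValueError("need at least one worker")
    heap = [(0.0, i) for i in range(n_workers)]  # (total seconds, worker index)
    buckets = [[] for _ in range(n_workers)]
    # Slowest tests first; ties broken by name so the result is deterministic.
    for name, secs in sorted(durations.items(), key=lambda kv: (-kv[1], kv[0])):
        total, i = heapq.heappop(heap)
        buckets[i].append(name)
        heapq.heappush(heap, (total + secs, i))
    return buckets
```

Feeding it `{"a": 10, "b": 8, "c": 6, "d": 4, "e": 2}` with two workers yields `[["a", "d", "e"], ["b", "c"]]`, i.e. 16 and 14 seconds of work. Placing the slowest tests first is what keeps the greedy assignment close to balanced.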

Staging Environment

We maintain a set of staging servers that mirror the configuration of the live servers as closely as possible. Each candidate build is deployed to the staging machines for manual verification by the developers who have changes in that push. Before we push to production, each developer and product manager involved must verify that their changes are present and working properly. Only after everyone has signed off on the build does it go live.
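The sign-off rule amounts to a simple gate: a staged build is ready only when every required person has verified it. The `StagedBuild` class below is a hypothetical sketch of that check, not code from Yelp’s tooling; the build ID and names are invented.

```python
from dataclasses import dataclass, field


@dataclass
class StagedBuild:
    build_id: str
    # Everyone with a change in the push, plus the product managers involved.
    required: set
    signed_off: set = field(default_factory=set)

    def sign_off(self, person):
        """Record that `person` has verified their changes on stage."""
        if person not in self.required:
            raise ValueError(f"{person} has nothing in build {self.build_id}")
        self.signed_off.add(person)

    def waiting_on(self):
        """Names of people who still need to sign off, sorted for display."""
        return sorted(self.required - self.signed_off)

    def ready_for_production(self):
        return not self.waiting_on()
```

Exposing `waiting_on()` alongside the yes/no check gives the pushmaster a list of exactly whom to chase.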

Handling Problems

If a changeset causes issues, the pushmaster (the engineer coordinating the push) has two options:

- Get a fix from the developer on the spot.

- Pull the changeset out of the current push, to be re-pushed later.

In practice, unless a given changeset is high-priority (a fix for a critical bug, or a new feature with a deadline), we’ve found that pulling the changeset is generally the more efficient option. Holding up the entire push while a single engineer works out a fix wastes the time of everyone else in the push. Pulling problematic changesets shortens our pushes, which lets us push more often; and because the next push is never far off, a pulled changeset whose fix was simple can go out again soon after.
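The rule of thumb above can be written as a small triage function. `Changeset` and `triage` are hypothetical names, and the policy encoded here (keep a broken changeset only if it is high-priority) is a simplification of what is ultimately the pushmaster’s judgment call.

```python
from dataclasses import dataclass


@dataclass
class Changeset:
    name: str
    high_priority: bool = False  # critical bug fix or deadline-bound feature
    broken: bool = False         # caused issues on stage


def triage(push):
    """Split a push into (changesets to keep, changesets to pull).

    Broken high-priority changesets are kept so the developer can fix them
    on the spot; every other broken changeset is pulled for a later push.
    """
    keep, pulled = [], []
    for cs in push:
        if cs.broken and not cs.high_priority:
            pulled.append(cs)
        else:
            keep.append(cs)
    return keep, pulled
```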

Going Live

Once all of the involved parties have verified their changes and all our regression tests pass, the chosen build is sent from the staging servers directly to production. A key aspect of this process is that it enforces stage testing before code goes live: it’s impossible to push code directly to production, which protects us from accidentally shipping unverified code.
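The "no direct-to-production" guarantee can be enforced structurally: if the only way to deploy to production is to promote something already on staging, there is no path for unstaged code. The `Deployer` class below is an in-memory, hypothetical sketch of that idea; a real deployment would copy build artifacts between servers rather than dictionary entries.

```python
class DeployError(Exception):
    pass


class Deployer:
    """Hypothetical deploy tool with no method for deploying straight to
    production: production can only receive a build that is already staged."""

    def __init__(self):
        self.staging = {}     # build_id -> artifact bytes
        self.production = {}  # build_id -> artifact bytes

    def deploy_to_staging(self, build_id, artifact):
        self.staging[build_id] = artifact

    def promote(self, build_id, signed_off, tests_passed):
        if build_id not in self.staging:
            raise DeployError(f"{build_id} was never staged")
        if not (signed_off and tests_passed):
            raise DeployError(f"{build_id} is not verified yet")
        # Ship the exact staged artifact rather than rebuilding from source,
        # so production runs the same bits everyone verified.
        self.production[build_id] = self.staging[build_id]
```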

Once a build is deployed to the production servers, the active version is switched over to the new build. The pushmaster and the engineers involved in the push continue to monitor our error logs to make sure the build is stable. After it has remained stable for a reasonable period, the deployment branch is merged back into master, making the new changes the base for all future development.
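The post doesn’t say how the active version is switched. One common technique, assumed here, is to keep each build in its own directory and atomically repoint a `current` symlink at the new one; rolling back is then the same operation with the previous build ID. The directory layout and the `activate_build` helper are hypothetical, and the atomicity relies on POSIX rename semantics.

```python
import os


def activate_build(releases_dir, build_id, link_name="current"):
    """Point releases_dir/<link_name> at releases_dir/<build_id> atomically.

    A temporary symlink is created and then renamed over the old link.
    os.replace is an atomic rename on POSIX, so readers see either the old
    build or the new one, never a missing link.
    """
    target = os.path.join(releases_dir, build_id)
    if not os.path.isdir(target):
        raise FileNotFoundError(target)
    link = os.path.join(releases_dir, link_name)
    tmp = link + ".tmp"
    if os.path.lexists(tmp):  # clean up a leftover from an interrupted switch
        os.remove(tmp)
    os.symlink(target, tmp)
    os.replace(tmp, link)     # swaps the link itself, not the directory it points to
    return os.path.realpath(link)
```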

While the overall process doesn’t iterate quite as quickly as, say, continuous deployment, we’ve found that it offers a good balance between build iteration time and developer involvement in testing. What we give up in speed we gain back in being able to quickly check changes that are hard to cover with comprehensive test cases but trivial for a developer to verify by hand.