Journey to Zero Trust Access

- Carlos B. Hernandez, Software Engineer; Adam Skalicky, Software Engineer

- Apr 15, 2025

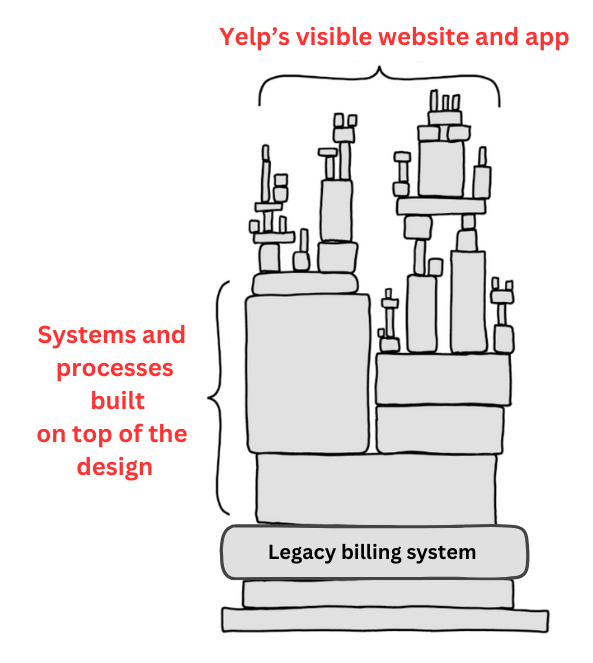

Glossary

- ZTA: zero trust architecture
- SAML: security assertion markup language (an SSO facilitation protocol)
- Devbox: a remote server used to develop software

Zero Trust Access

Remote Future

Yelp is now a fully remote company, so our employee base is increasingly distributed across the world, which makes secure access to resources from anywhere a critical business function. Yelp historically used Ivanti Pulse Secure as the employee VPN, but it became clear that a more reliable solution was needed to ensure secure and consistent access to internal resources. The Corporate Systems and Client Platform...